Archive

Generating (a lot of) Data

In my previous post I introduced the Osiris project that I’ve started working on and outlined the basic construction system for the planet I’m trying to procedurally build. With that set up, the next step was to create a system for generating and storing the multitude of data that is required to represent an entire planet.

Now planets are pretty large things and the diversity and quantity of data that is required to represent one at even fairly low fidelity gets very large very quickly. A requirement of this system though is that I want to be able to run my demo and immediately fly down near to the surface to see what’s there – I don’t want to have to sit waiting for minutes or hours while it churns away in the background building everything up.

For this to work I obviously need some form of asynchronous data generation system that can run in the background spitting out bits and pieces of data as quickly as possible while the main foreground thread is dealing with the user interface, camera movement and most importantly rendering the view.

This fits quite well with modern CPUs where the number of logical cores and hardware threads is continuing to rise providing increasing scope for such background operations, but that does also mean that the data generation system needs to be able to run on an arbitrary number of threads rather than just a single background one. An added bonus of such scalability is that time can even be stolen from the primary rendering thread when not much else is going on – for example when the view is stationary or when the application is minimised.

Ultimately this work should be able to be farmed off to secondary PCs in some form of distributed computing system or even out into the cloud – but to support that data generation has to be completely decoupled from the rendering and able to operate in isolation. Even if such distribution never happens though designing in such separation and isolation is still a valuable architectural design goal.

So I need to be able to generate data in the background, but to achieve my interactive experience I also need it to be generating the correct data in the background, which in this case means that at any given moment I want it to be generating data for the most significant features that are closest to the viewpoint. This determination of what to generate also needs to be highly dynamic as the viewpoint can move around very quickly – thousands or even tens of thousands of miles per hour at times – so it’s no good queuing up thousands of jobs, the current set of what’s required needs to be generated and maintained on the fly.
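As a rough illustration of "generate the right data first", here is a minimal sketch that re-ranks candidate jobs every time the viewpoint moves instead of keeping a long-lived queue. The job tuple layout, the `significance` weight and the distance-over-significance ranking are all assumptions made for this example, not a description of the Osiris code.

```python
import math

def pick_next_jobs(candidates, viewpoint, budget):
    """Return the names of the `budget` most urgent jobs for this viewpoint.

    candidates: list of (name, position, significance) tuples (hypothetical).
    Urgency is distance divided by significance, so nearby features come
    first and large features are picked up from further away.
    """
    def urgency(job):
        _, position, significance = job
        return math.dist(position, viewpoint) / significance

    return [job[0] for job in sorted(candidates, key=urgency)[:budget]]

# Hypothetical jobs: a big distant feature and two small closer ones.
jobs = [
    ("far_mountain", (100.0, 0.0, 0.0), 10.0),
    ("near_rock", (1.0, 0.0, 0.0), 1.0),
    ("mid_hill", (20.0, 0.0, 0.0), 1.0),
]
```

Calling `pick_next_jobs` again after the camera moves gives a fresh working set, which is the point: nothing stale sits in a queue.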

Finally as generation of data can be a non-trivial process the system needs to be able to cache data it’s already generated on disk for rapid reloading on subsequent runs or even for later on in the same run if the in-memory data had to be flushed to keep the total memory footprint down. I can’t simply cache everything however as for an entire planet the amount of data for the level of fidelity I want to reproduce can easily run into terabytes so it’s important to only cache up to a realistic point – say a few gigabytes worth – with the rest being always generated on demand.

To maximise the effectiveness of disk caching I also want to include compression in the caching system – the computation overhead of a standard compression library such as zlib shouldn’t be exorbitantly expensive compared to the potentially gigabytes of saved disk space.
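A minimal sketch of a size-capped, compressed cache along these lines is shown below. It keeps the blobs in memory as a stand-in for files on disk, and the class name, the least-recently-used eviction policy and the byte budget are assumptions for illustration only.

```python
import zlib
from collections import OrderedDict

class CompressedCache:
    """Stores zlib-compressed blobs up to max_bytes, evicting the least recently used."""

    def __init__(self, max_bytes):
        self.max_bytes = max_bytes
        self.used = 0
        self.entries = OrderedDict()  # key -> compressed bytes, oldest first

    def put(self, key, raw):
        blob = zlib.compress(raw)
        if key in self.entries:
            self.used -= len(self.entries.pop(key))
        self.entries[key] = blob
        self.used += len(blob)
        # Drop the oldest entries until we are back under budget.
        while self.used > self.max_bytes and self.entries:
            _, evicted = self.entries.popitem(last=False)
            self.used -= len(evicted)

    def get(self, key):
        blob = self.entries.get(key)
        if blob is None:
            return None  # not cached: the caller regenerates on demand
        self.entries.move_to_end(key)  # mark as recently used
        return zlib.decompress(blob)
```

Anything that falls out of the cache is simply regenerated, which matches the "cache up to a realistic point" idea above.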

This is quite a shopping list of requirements of course, which brings home the unavoidable complexity of generating high fidelity data on a planetary scale, but even non-optimal solutions to these primary requirements should allow me to build on top of such a generic data generation system and start to look at the planetary infrastructure generation and simulation work that I am primarily interested in.

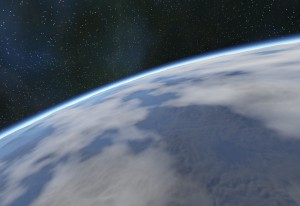

Unfortunately of course data generation architecture lends itself only so well to pretty pictures so rather than some dull boxes and lines representation of data flow the images with this post show the atmospheric scattering shader that I’ve also recently added – it’s probably the single biggest improvement in both visual impact and fidelity and suddenly makes the terrain look like a planet rather than just a textured ball – more on this atmospheric shader in a later post.

Captain Coriolis

Continuing the theme of clouds, I’ve been looking at a few improvements to make the base cloud effect more interesting, so with the texture mapping sorted what else can we do with the clouds? Well because the texture is just a single channel intensity value at the moment we can monkey around with the intensity in the shader to modify the end result.

The first thing we can do is change the scale and threshold applied to the intensity in the pixel shader. The texture is storing the full [0, 1] range but we can choose which part of this to show to provide more or less cloud cover:

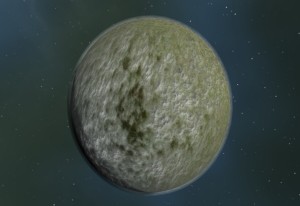

Here the left most image is showing 50% of the cloud data, the middle one just 10% and the right hand one 90%. Note that on this final image the contrast scalar has also been modified to produce a more ‘overcast’ type result. Because these values can be changed on the fly it leaves the door open to having different cloud conditions on different planets or even animating the cloud effect over time as the weather changes.
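The scale-and-threshold step can be sketched in a couple of lines. This is plain Python rather than shader code, and the parameter names `threshold` and `contrast` are assumptions chosen for the example.

```python
def cloud_alpha(intensity, threshold, contrast):
    """Map a stored [0, 1] cloud intensity to an alpha value.

    Everything below `threshold` becomes clear sky; `contrast` controls how
    quickly the remaining range ramps up to fully opaque cloud.
    """
    return min(1.0, max(0.0, (intensity - threshold) * contrast))
```

Lowering `threshold` shows more of the stored data (heavier cover), and raising `contrast` pushes the result towards a flat overcast look.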

Next I thought it would be interesting to add perturbations to the cloud function itself to break up some of its unrealistic uniformity. First up is a simple simulation of the global Coriolis effect, which essentially means that the clouds in the atmosphere are subject to varying rotational forces as they move closer to or further away from the equator due to the varying tangential speeds at differing latitudes.

The real effect is of course highly complex but with a simple bit of rotation around the Y axis based upon the radius of the planet at the point of evaluation I can give the clouds a bit of a twist to at least create the right impression:

Here the image on the left is without the Coriolis effect and the image on the right is with the Coriolis effect. The amount of rotation can be played with based on the planet to make it more or less dramatic but even at low levels the distortion in the cloud layer that it creates really helps counter-act the usual grid style regularity you usually get with noise based 3D effects.
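One possible reading of that twist is sketched below: each sample point is rotated around the Y axis by an angle proportional to its distance from that axis before the noise is evaluated. Interpreting "radius at the point of evaluation" as the distance from the Y axis, and the `strength` parameter, are assumptions for the example.

```python
import math

def rotate_about_y(p, angle):
    """Rotate point p = (x, y, z) around the Y axis by `angle` radians."""
    x, y, z = p
    c, s = math.cos(angle), math.sin(angle)
    return (c * x + s * z, y, -s * x + c * z)

def coriolis_twist(p, strength):
    """Twist p around Y by an amount that grows with distance from the axis.

    Points at the poles sit on the axis and do not move; points on the
    equator are rotated the most.
    """
    x, _, z = p
    ring_radius = math.hypot(x, z)
    return rotate_about_y(p, strength * ring_radius)
```

The twisted point is then fed to the cloud function in place of the original one.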

With the mechanism in place to support the global perturbation from the Coriolis effect, I then thought it would be interesting to have a go at creating some more localised distortions to try to break up the regularity further and hopefully make the cloud layer a bit more realistic looking or at least more interesting.

The kind of distortions I was after were the swirls, eddies and flows caused by cyclical weather fronts, mainly typhoons and hurricanes of greater or lesser strength. To try to achieve this I created a number of axes in random directions from the centre of the planet each of which had a random area of influence defining how close a point had to be to it to be affected by its perturbation and a random strength defining how strongly affected points would be influenced.

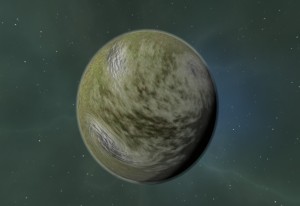

Each point being evaluated is then tested against this set of axes (40 currently) and for each one if it’s close enough it is rotated around the axis by an amount relative to its distance from the axis. So points at the edge of the axis’ area of influence hardly move while points very close to the axis are rotated around it more. This I reckoned would create some interesting swirls and eddies which in fact it does:

It can produce some fairly solid looking clumps which is not great but on average I think it adds positively to the effect. (Looking at the type of distortion produced I suspect it may also be useful for creating neat swirly gas planets or stars with an appropriate colour ramp – something for the future there I hope).
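The axis-based swirl can be sketched roughly as follows. Measuring "closeness" as the angle between the point and the axis, the linear falloff, and the tuple layout of each axis are assumptions for this example; the rotation itself is the standard Rodrigues formula.

```python
import math

def rotate_about_axis(p, axis, angle):
    """Rodrigues' rotation of p around a unit-length axis."""
    c, s = math.cos(angle), math.sin(angle)
    dot = sum(a * b for a, b in zip(axis, p))
    cross = (axis[1] * p[2] - axis[2] * p[1],
             axis[2] * p[0] - axis[0] * p[2],
             axis[0] * p[1] - axis[1] * p[0])
    return tuple(p[i] * c + cross[i] * s + axis[i] * dot * (1.0 - c)
                 for i in range(3))

def swirl(p, axes):
    """Perturb unit vector p by each nearby axis.

    axes: list of (unit_axis, influence_radians, strength) tuples.
    Rotation is strongest next to the axis and fades to nothing at the
    edge of its area of influence.
    """
    for axis, influence, strength in axes:
        cos_angle = max(-1.0, min(1.0, sum(a * b for a, b in zip(axis, p))))
        distance = math.acos(cos_angle)
        if distance < influence:
            falloff = 1.0 - distance / influence
            p = rotate_about_axis(p, axis, strength * falloff)
    return p
```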

So far I’ve got swirly white clouds but to make them seem a bit more varied the next thing I tried was to modulate not just the alpha of the cloud at each point but the shade as well. At first I was going to go with a second greyscale fBm channel in the cube map using different lacunarity and scales to produce a suitable result, but then I thought why not use the same channel but just take a second sample from a different point on the texture. This worked out to be a pretty decent substitute: by taking a sample from the opposite side of the cube map from the one being rendered and using this as a shade value rather than an alpha it introduces some nice billowy perturbations in the clouds that I think help to give them an illusion of depth and generally look better:

The main benefit of re-using the single alpha channel is it leaves me all three remaining channels in my 32bit texture for storing the normal of the clouds so I can do some lighting. Now for your usual normal mapping approach you only need to store two components of the surface normal in the normal map texture as you can work out the third in the pixel shader, but doing this means you lose the sign of the re-constituted component. This isn’t a problem for usual normal mapping where the map is flat and you use the polygon normal, bi-normal and tangent to orient it appropriately, but in my case the single map is applied to a sphere so the sign of the third component is important. Fortunately having three channels free means I can simply store the normal raw and not worry about re-constituting any components – as it’s a sphere I also don’t need to worry about transforming the normal from image space to object space.

Calculating the normal to store is an interesting problem too. I first tried using standard techniques to generate it from the alphas already stored in the cube map but this understandably produced seams along the edges of each cube face. It turns out a better way is to treat the fBm function as the 3D function it is, and use the gradient of the function at each point to calculate the normal – this is essentially the same way as the normals are calculated for the landscape geometry during the marching cubes algorithm.
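A minimal sketch of the gradient approach, using central differences on any scalar function of three coordinates. The function name and the `eps` step are assumptions for the example; a real version would pass the fBm function in as `f`.

```python
import math

def gradient_normal(f, p, eps=1e-3):
    """Approximate the unit gradient of f(x, y, z) at point p.

    Because f is sampled directly in 3D there are no cube-face seams:
    neighbouring texels on different faces see the same function.
    """
    x, y, z = p
    g = (f(x + eps, y, z) - f(x - eps, y, z),
         f(x, y + eps, z) - f(x, y - eps, z),
         f(x, y, z + eps) - f(x, y, z - eps))
    length = math.sqrt(sum(c * c for c in g))
    if length == 0.0:
        return (0.0, 0.0, 0.0)  # flat spot: no meaningful direction
    return tuple(c / length for c in g)
```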

With the normal calculated and stored, I then put some fairly basic lighting calculations into the pixel shader to do cosine based lighting. The first version produced very harsh results as there isn’t really any ambient light to fill in the dark side of the clouds so I changed it to use a fair degree of double sided lighting to smooth out the effect and make it a bit more subtle/believable.
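The post does not say exactly how the double sided lighting is blended, so the sketch below substitutes a common stand-in, "wrap" lighting, where the cosine term is allowed to reach partway around the dark side. The `wrap` parameter is an assumption; with `wrap` set to zero it reduces to plain cosine lighting.

```python
def cloud_light(normal, light_dir, wrap):
    """Cosine lighting softened by a wrap factor in [0, 1].

    normal and light_dir are unit vectors. wrap = 0 gives the harsh
    result described above; larger values let light bleed round the
    terminator.
    """
    n_dot_l = sum(n * l for n, l in zip(normal, light_dir))
    return max(0.0, (n_dot_l + wrap) / (1.0 + wrap))
```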

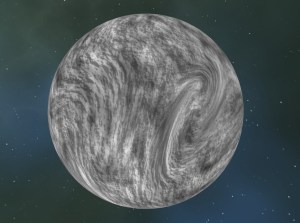

Shown below is the planet without lighting, the generated normal map, the lighting component on its own and finally the lighting component combined with the planet rendering:

As you can see from the two right hand images there are still some problems with the system as it stands – the finite resolution of the cube map means there are obvious texel artifacts in the lighting where the normals are interpolated for a start – but I think it’s still a worthwhile addition.

Although I’m not 100% happy with the final base cloud layer effect I think that’s probably enough for now, I’m currently deciding what to do next – possibly try positioning my planet and sun at realistic astronomical distances and sizes to check that the master co-ordinate system is working. This is not visually fun but necessary for the overall project although it will probably also require some work on the camera control system to make moving between and around bodies at such distances workable.

More interestingly I might try adding some glare and lens flare to the sun rendering to make it a bit more visually pleasing – the existing disc with fade-off is pretty dull really.

Clouding the Issue

Taking a break from hills and valleys, I thought I would have a go at adding some clouds to the otherwise featureless sky around my planets. There are various methods employed by people to simulate, model and render clouds depending on their requirements, some are real-time and some are currently too slow and thus used in off-line rendering – take a look at the Links section for a couple of references to solutions I have found out on the net.

Although I would like to add support for volumetric clouds at some point (probably using lit cloud particle sprites of some sort) to get things underway I thought a single background layer of cloud would be a good starting point. Keeping to my ethos, I do of course want to generate this entirely procedurally if at all possible and as with so many other procedural effects a bit of noise is a good starting point.

In this case while I get the mapping and rendering of the cloud layer working I’ve opted to use a simple fBm function (Fractional Brownian Motion) that combines various octaves of noise at differing frequencies and amplitudes to produce quite a nice cloudy type effect. It’s not convincing on its own and will need some more work later but for now it will do.
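For readers who have not met fBm before, here is a minimal self-contained sketch. The base noise is a simple hashed value noise picked only to keep the example short (the post does not say which noise basis is used), and the octave count, `lacunarity` and `gain` defaults are assumptions.

```python
import math

def lattice_value(ix, iy, iz, seed):
    """Deterministic pseudo-random value in [0, 1) for an integer lattice point."""
    h = (ix * 374761393 + iy * 668265263 + iz * 2147483647 + seed * 144665) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    return ((h ^ (h >> 16)) & 0xFFFFFF) / 16777216.0

def lerp(a, b, t):
    return a + (b - a) * t

def value_noise(x, y, z, seed=0):
    """Smoothly interpolated lattice noise in 3D."""
    ix, iy, iz = math.floor(x), math.floor(y), math.floor(z)
    ux, uy, uz = (t * t * (3.0 - 2.0 * t) for t in (x - ix, y - iy, z - iz))

    def corner(dx, dy, dz):
        return lattice_value(ix + dx, iy + dy, iz + dz, seed)

    x00 = lerp(corner(0, 0, 0), corner(1, 0, 0), ux)
    x10 = lerp(corner(0, 1, 0), corner(1, 1, 0), ux)
    x01 = lerp(corner(0, 0, 1), corner(1, 0, 1), ux)
    x11 = lerp(corner(0, 1, 1), corner(1, 1, 1), ux)
    return lerp(lerp(x00, x10, uy), lerp(x01, x11, uy), uz)

def fbm(x, y, z, octaves=6, lacunarity=2.0, gain=0.5):
    """Sum octaves of noise, each at higher frequency and lower amplitude."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for octave in range(octaves):
        total += amplitude * value_noise(x * frequency, y * frequency,
                                         z * frequency, seed=octave)
        norm += amplitude
        amplitude *= gain
        frequency *= lacunarity
    return total / norm  # normalised back into roughly [0, 1]
```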

Now the fBm function can be evaluated in 3D which is great but of course to render it on the geodesic sphere that makes up my atmosphere geometry I need to map it somehow into 2D texture space – I could use a full 3D texture here which would make it simple but would also consume a vast amount of texture memory most of which would be wasted on interior/exterior points so I’ve decided not to do that. I could also evaluate the fBm function directly in the shader but as I want to add more complex features to it I want to keep it offline.

The simplest way to map the surface of a sphere into 2D is to use polar co-ordinates where ‘u’ is essentially the angle ‘around’ the sphere and ‘v’ the elevation up or down. This is simple to calculate but produces a very uneven mapping of texture space as the texels are squeezed more and more towards the poles producing massive warping of the texture data.

The two images here show my basic fBm cloud texture and that same texture mapped onto the atmosphere using simple polar co-ordinates.

(ignore the errant line of pixels across the planet – this is caused by an error of some sort in the shader that I couldn’t see on inspection and I didn’t want to spend time on it if the technique was going to be replaced anyway)

The distortion caused by the polar mapping is very obvious when applied to the cloud texture with significant streaking and squashing evident emanating from the pole – obviously far from ideal.

One way to improve the situation is to apply the inverse distortion to the texture when you are generating it, in this case treating texture generation as a 3D problem rather than a 2D one by mapping each texel in the cloud texture onto where it would be on the sphere after polar mapping and evaluating the fBm function from that point. As can be seen in the images below this produces a cloud texture that gets progressively more warped towards the regions that will ultimately be mapped to the poles so it looks wrong when viewed in 2D as a bitmap, but should produce better results when mapped onto the sphere:

As you can see in the planetary image, the streaking and warping is much reduced using this technique and the cloud effect is almost usable but if you look closely you will see that there is still an artifact around the pole albeit a much smaller one – it would be significantly larger when viewed from the planet’s surface however so is still not acceptable. One way to ‘cheat’ around this problem is to simply ensure that cloud cover at the pole is always 100% cloud or 100% clear sky to hide the artifact and some released space based games that don’t require you to get very close to the planets do this very effectively, but in a world of infinite planets it’s a big restriction that I don’t want to have to live with.
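The polar mapping and its inverse can be sketched as a pair of small functions. The axis convention (Y up, `u` measured around Y from the +X axis) is an assumption for the example; when generating the texture, each texel's (u, v) goes through `sphere_from_uv` and the resulting 3D point is what gets passed to the noise function.

```python
import math

def polar_uv(d):
    """Map a unit direction to polar texture co-ordinates (u, v) in [0, 1]."""
    x, y, z = d
    u = (math.atan2(z, x) / (2.0 * math.pi)) % 1.0  # angle around the sphere
    v = math.acos(max(-1.0, min(1.0, y))) / math.pi  # 0 at north pole, 1 at south
    return u, v

def sphere_from_uv(u, v):
    """Inverse mapping: the point on the unit sphere a texel will land on."""
    theta = u * 2.0 * math.pi
    phi = v * math.pi
    return (math.sin(phi) * math.cos(theta),
            math.cos(phi),
            math.sin(phi) * math.sin(theta))
```

Note that every texel in the top row (v = 0) maps to the same point, which is where both the wasted texels and the pole artifact come from.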

Another downside of polar mapping is that the non-uniform nature of the texel distribution means that many of the texels on the cloud texture aren’t really contributing anything to the image depending on how close to the pole they are which is a waste of valuable texture memory.

So if polar mapping is out what are we left with? Well next I thought it was time to drop it altogether and move on to cubic mapping, a technique usually employed for reflection/environment mapping or directional ambient illumination. With this technique we generate not one cloud texture but six each representing one side of a virtual cube centred around our planet. With this setup when shading a pixel the normal of the atmospheric sphere is intersected with the cube and the texel from that point used. The benefit of cube mapping is that there is no discontinuity around the poles so it should be possible to get a pretty even mapping of texels all around the planet, making better use of texture storage and providing a more uniform texel density on screen amongst other things.

So ubiquitous is cube mapping that graphics hardware even provides a texture sampler type especially for this so we don’t even need to do any fancy calculations in the pixel shader, we simply sample the texture using the normal directly which is great for efficiency. The only downside is that now we are storing six textures instead of one the memory use does go up, so I’ve dropped the texture size from 1024×1024 to 512×512 but as each texture is only mapped onto 1/6th of the surface of the sphere the overall texel density actually increases and the more effective use of texels means the 50% increase in memory usage is worthwhile.

The two images below show how one face of the texture cube and the planet now looks with this texture cube applied:

Again it’s different but the edges of the cube are pretty clear and ruin the whole effect, so as a final adjustment we again need to apply the inverse of the distortion effect implied by moving from a cube to a sphere and map the co-ordinates of the points on our texture cube onto the sphere prior to evaluating fBm:
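As a rough sketch of that cube-to-sphere step: each texel position on a cube face is turned into a 3D point on the cube and then normalised so it sits on the unit sphere, and that direction is what the noise is evaluated at. The face naming and the orientation of `s` and `t` on each face are assumptions for the example (real graphics APIs each have their own face layout).

```python
import math

def cube_texel_direction(face, s, t):
    """Direction on the unit sphere for a point on a cube face.

    face: one of '+x', '-x', '+y', '-y', '+z', '-z' (hypothetical layout).
    s, t: position across the face, each in [-1, 1].
    """
    points = {
        "+x": (1.0, s, t), "-x": (-1.0, s, t),
        "+y": (s, 1.0, t), "-y": (s, -1.0, t),
        "+z": (s, t, 1.0), "-z": (s, t, -1.0),
    }
    x, y, z = points[face]
    length = math.sqrt(x * x + y * y + z * z)
    return (x / length, y / length, z / length)
```

Texels on a shared edge of two faces produce the same direction, so the noise matches up across the join and the cube edges disappear.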

Finally we have a nice smooth and continuous mapping of the cloud texture over our sphere. Result! To show how effective even a simple cloud effect like this can be in adding interest to a scene here is a view from the planet surface with and without the clouds:

There is obviously more to do but I reckon it’s a decent start.

Mountains and Ridges

I’ve been looking at different ways to generate terrain recently with a focus on trying to make something that’s a bit more realistic. One of the main problems with Geo’s terrain so far is the same problem that most of my previous experiments have suffered from and the same problem you will see on the majority of procedural landscape demos out there – the landscape is too homogenous, it’s too ‘samey’.

This is usually a result of whatever fractal or noise based function that is used to generate the heights being applied in too uniform a fashion over the whole landscape area, it’s also a result of the fact that most of these functions treat 3D space as a uniform entity so there is no sense of direction and no knowledge of the processes of fault formation and erosion that shape real landscapes.

The image here taken from Geo shows clearly what I mean – a fairly constant lumpiness that isn’t exactly convincing:

Now matters can be improved somewhat by using more noise functions at lower frequencies to control the parameters but you still end up with no directionality and something that while less regular is still far from realistic. This second image is taken from Google Earth and shows the sort of ridges and valleys that I am after but so far lacking:

There are several research papers out there I’ve found so far that present various techniques for producing more physically accurate simulations of terrain based upon studies of real geological formations, wind and water erosion and other climatic factors, but these are invariably very complex, very slow or only model a certain effect in isolation – I really want to develop something that runs while not in real time at least in seconds or minutes rather than hours and I need to understand it completely so I can alter and tweak it to get the effect I want.

Not being a mathematical genius I find many research papers quite impenetrable but what most annoys me is when they are deliberately so – simple coding concepts expressed in complex algebraic formulas for example when half a dozen lines of pseudo-code would make it blindingly obvious to anyone with even rudimentary coding ability. I’m not anti-academia, and I’m certainly not anti-research; I just want people to express their ideas in a form that will *help* others understand and develop them rather than in a form that is apparently designed just to make them look as clever as possible.

Okay, with that personal rant over – being unwilling to get into the complexities of real geological simulation what I thought I would do therefore is have a bit of a go at trying to create something that was closer to real world mountains and valleys but was still controllable with just a couple of parameters. It also had to be quite efficient to generate and combine well with other procedural techniques I may employ.

The plan I came up with was to try to ‘grow’ a set of line segments representing the mountain ridges I wanted then calculate the height of the terrain as a function of the distance of each point from one of these ridges. The ridge line segments are 2D and so can be easily visualized by rendering them to a bitmap but are mapped onto the surface of the planet using a simple transformation.

Shown here is one of the first implementations of this system, it starts with a single point located roughly at the centre from which three ridges head out in randomly chosen directions – although the directions are chosen to not be too close to each other. Each ridge is formed from a number of consecutive segments with a random orientation change applied before each one is added making the ridge meander around in a more convincing fashion. The segments also get progressively lower in altitude and there is a random chance at each step that the ridge will fork into two with each child ridge heading off in a random direction based off the direction of the original.
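A minimal sketch of growing one ridge in this way is given below. All of the parameter names and default values are assumptions, and forking is simplified so that the parent ridge carries on while a single child branches off, rather than splitting into two fresh children.

```python
import math
import random

def grow_ridge(start, heading, height, rng, step=1.0, wobble=0.4,
               decay=0.9, fork_chance=0.15, min_height=0.1):
    """Return a list of ridge segments ((x0, y0), (x1, y1), height).

    Each segment turns by a small random angle, loses some altitude, and
    may spawn a child ridge heading off to one side.
    """
    segments = []
    x, y = start
    while height > min_height:
        heading += rng.uniform(-wobble, wobble)  # meander
        nx, ny = x + step * math.cos(heading), y + step * math.sin(heading)
        segments.append(((x, y), (nx, ny), height))
        x, y = nx, ny
        height *= decay  # ridges get lower as they grow
        if height > min_height and rng.random() < fork_chance:
            side = rng.choice((-1.0, 1.0)) * rng.uniform(0.5, 1.0)
            segments += grow_ridge((x, y), heading + side, height, rng, step,
                                   wobble, decay, fork_chance, min_height)
    return segments
```

Passing in a seeded `random.Random` keeps the result repeatable, which matters if the same terrain has to be regenerated on demand.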

From this I then had a look at working out height values based on the distance of each point from the closest ridge, the results are shown here both as a grayscale of the height field and as it appears when applied to a planet surface:

It was quite an encouraging result and made me feel I was on the right path at least to produce the kind of effect I wanted, but there was obviously much more to be done. The first improvement was to do something about the function that works out the height of a terrain point from its distance to the nearest ridge line segment. The image above was generated using a fixed linear distance function but this doesn’t make much sense really as the ‘tail’ end of the segments are much lower in altitude than the roots and so should have a lower influence. Taking this into account by scaling the distance over which a segment has an influence based upon its height gives a better result:

Now a single mountain does not a range make, so next I tried generating ridges from multiple points combining the results by taking the highest point at each intersection:
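The height calculation can be sketched like this: each segment has a reach proportional to its own height, contributes a linear falloff within that reach, and the terrain takes the highest contribution from any segment. The `reach_per_height` factor and the segment tuple layout are assumptions for the example.

```python
import math

def point_segment_distance(p, a, b):
    """Shortest distance from 2D point p to the segment a-b."""
    ax, ay = a
    bx, by = b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:
        return math.dist(p, a)
    t = ((p[0] - ax) * dx + (p[1] - ay) * dy) / length_sq
    t = max(0.0, min(1.0, t))  # clamp to the ends of the segment
    return math.dist(p, (ax + t * dx, ay + t * dy))

def ridge_height(p, segments, reach_per_height=10.0):
    """Terrain height at p from a list of ((x0, y0), (x1, y1), height) segments."""
    best = 0.0
    for a, b, height in segments:
        reach = height * reach_per_height  # lower segments influence less ground
        distance = point_segment_distance(p, a, b)
        if distance < reach:
            best = max(best, height * (1.0 - distance / reach))
    return best
```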

Which looks a bit better, and while as many points as required could be combined in this way, I thought it might be nice to be able to generate ridge line segments all starting off from a connected ‘master ridge’ rather than discrete points. This master ridge formation is controlled by specifying a number of spawn points just like for the images above, but this time rather than treating them independently, the program creates a sequence of connected segments directly between the spawn points:

It’s pretty uninteresting with straight lines between the points, but we can make it more interesting by subdividing these connecting segments into smaller pieces and displacing the newly created intermediate points by some sort of random function. The method I chose is very simple and something of a fractal classic:

• take each straight segment shown above and split it at its middle point into two

• displace the newly created middle point by a random distance in a direction perpendicular to the original line

• repeat this process recursively on the two new half-length segments as long as they are longer than some minimum threshold.
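The three steps above can be sketched as a short recursive function. The `roughness` factor (maximum displacement as a fraction of segment length) and `min_length` threshold are assumptions for the example; `roughness` needs to stay well below about 0.85 so that each half is always shorter than its parent and the recursion terminates.

```python
import math
import random

def displace(a, b, rng, roughness=0.3, min_length=1.0):
    """Return the points of a jagged line from a to b (both included)."""
    dx, dy = b[0] - a[0], b[1] - a[1]
    length = math.hypot(dx, dy)
    if length <= min_length:
        return [a, b]
    # Move the midpoint along the perpendicular (-dy, dx) by a random amount.
    offset = rng.uniform(-roughness, roughness) * length
    mid = ((a[0] + b[0]) / 2.0 - dy / length * offset,
           (a[1] + b[1]) / 2.0 + dx / length * offset)
    left = displace(a, mid, rng, roughness, min_length)
    right = displace(mid, b, rng, roughness, min_length)
    return left[:-1] + right  # drop the duplicated midpoint
```

Scaling the displacement by the segment length means the large bends happen early and the detail gets finer with each level of recursion.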

What we now get is something a lot more interesting and closer to what we might expect from a mountain ridge line:

Of course we don’t want just one big ridge, so by adding in the spawning of child ridges from the sides of our master ridge we get something a bit more like what we were after at the beginning, a combination of ridges in various directions and sizes but all heading down from our master ridge. These child ridges are set to spawn randomly along the length of the master ridge but head out in a direction roughly perpendicular to the direction of travel of the master ridge at their spawn point so they generally fan out from the ridge in a fairly sensible pattern:

Looking at this effect now I reckon it would be even better to spawn some additional children from the end points of the master ridge to get rid of the ‘bulb’ of height influence around those points…something to try I think.

One thing obvious from these images however is the way the land on either side of the ridges slopes away at a constant slope producing a hard edge along the ridge itself and an unrealistically constant gradient on either side. To solve this my next step was to exchange the linear distance function used up to this point for a hermite interpolation that provides a smoother blend between the points at the ridge top and those at the bottom of the slope. The difference can be clearly seen on these two simple graphs:

You can see the smooth-step graph on the right hand side forms a far more natural shape for the hillsides and because the slope becomes more gradual towards the top (the right hand edge of the graph) the ridge line itself is wider, smoother and far less like a knife edge.
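For reference, the two falloff curves being compared can be written as below, where `t` runs from 0 at the bottom of the slope to 1 at the ridge top. The cubic form shown is the standard smoothstep (a Hermite interpolation with zero slope at both ends); the function names are just for this example.

```python
def linear_falloff(t):
    """Straight-line blend: constant gradient, sharp crease at the ridge."""
    return min(1.0, max(0.0, t))

def smoothstep(t):
    """Hermite blend 3t^2 - 2t^3: flattens out at both the base and the top."""
    t = min(1.0, max(0.0, t))
    return t * t * (3.0 - 2.0 * t)
```

Near the top the smoothstep value sits above the linear one, which is what widens and rounds off the ridge line.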

Finally to make things a bit more interesting again let’s add some higher frequency perturbations using a ridged fractal similar to that from our original homogenous landscape but at a much lower amplitude just to add variety over our too-smooth slopes:

This is finally starting to look a bit more like some mountains although there is fairly obviously still a very long way to go to achieve something that really does look realistic – there are some undesirable artifacts where ridge influences meet for example producing fairly artificial looking gulleys, but it’s certainly promising enough I think to continue the experiment.

PS: Hope you found this interesting, it certainly helps me get my head around the process documenting it in this much detail – constructive feedback and comments are as always welcomed!

Planetary Basics

I thought maybe a bit of a description of how Geo represents its planetary bodies might be interesting, it will nail it down in black and white for me if no one else anyhow.

As procedural landscapes have been an interest of mine for many years it’s no surprise that I’ve played about with many little projects over those years looking into different techniques and algorithms. All of these earlier projects however suffered from the same limitation that is prevalent in many of even the latest games today – they represented their landscapes as simple two-dimensional grids of altitude values known usually as height fields.

Now height fields are pretty much ubiquitous with landscape rendering because they are simple, contain a very high degree of coherency and thus lend themselves to a variety of high speed rendering and collision algorithms that take advantage of those facts.

Height fields do however by their nature suffer from the problem of being two dimensional with each point on the landscape represented only by a height value. This precludes any form of vertical surface on the landscape such as a cliff face along with concave constructs such as overhangs and caves, lending height field based landscapes a distinctive simplicity.

There have been various extensions applied to basic height fields to work around these limitations at least in part, games such as Crysis use selective areas of voxel based geometry to produce overhangs for example and many games employ sections of pre-built geometry such as cave or tunnel entrances that can be placed in the world to hide the join picture-frame style between the height field landscape geometry and the non-height field based interiors.

Rather than try extending something I have played about with previously however I thought it would be more interesting to try something completely new, so with Geo I’ve chosen to represent entire planets as single voxel spaces from which geometry is generated. These voxels are represented using a fairly standard octree structure so they can be rendered at differing levels of detail as required – see Wikimedia amongst others for more information on Octrees and Voxels

A simulated Earth for example starts with a single voxel containing the entire planet which is rendered using geometry generated with the marching cubes algorithm over a 16×16×16 grid. The values fed in to the marching cubes come from a planetary density function which essentially evaluates for a given point in space how far it is from the surface of the planet; +ve being above the planet surface and –ve being below. When the marching cubes algorithm processes these density values the iso-surface produced at the zero density value then gives the geometry for the surface of the planet – probably a couple of thousand triangles for this lowest LOD level.
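To make the density function idea concrete, here is a minimal sketch for a perfectly spherical planet, plus a helper that samples it over a grid and counts the cells the surface passes through (the cells marching cubes would emit triangles for). The helper name and the sampling bounds are assumptions for the example, and the triangle generation itself is not shown.

```python
import math

def sphere_density(p, radius):
    """Signed distance to the surface: positive above, negative below."""
    return math.sqrt(p[0] ** 2 + p[1] ** 2 + p[2] ** 2) - radius

def count_surface_cells(density, lo, hi, cells=16):
    """Count grid cells whose corner densities straddle zero."""
    step = (hi - lo) / cells
    samples = [[[density((lo + i * step, lo + j * step, lo + k * step))
                 for k in range(cells + 1)]
                for j in range(cells + 1)]
               for i in range(cells + 1)]
    crossing = 0
    for i in range(cells):
        for j in range(cells):
            for k in range(cells):
                corners = [samples[i + a][j + b][k + c]
                           for a in (0, 1) for b in (0, 1) for c in (0, 1)]
                if min(corners) < 0.0 <= max(corners):
                    crossing += 1
    return crossing
```

Swapping `sphere_density` for something that adds noise or subtracts caves changes the shape without touching the rest of the pipeline.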

As we get closer to the planet the voxel is split into eight children at the next level down in the octree and geometry generated for each of them for rendering and so on. What happens first of course is the viewpoint position is used to determine which voxels from which levels of the octree should be rendered – voxels close to the viewpoint are taken from deeper in the octree and so produce higher fidelity geometry while those further from the viewpoint use voxels from higher up the tree and thus produce lower fidelity geometry. A natural level of detail scheme thus falls out of the tree traversal ensuring as uniform a triangle density as possible is produced on screen.
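A minimal sketch of that traversal: a voxel is split only while the viewpoint is within some multiple of its size, so the selected set is fine near the camera and coarse far away. The `detail` factor and the use of centre distance as the split test are assumptions for the example.

```python
import math

def select_voxels(centre, size, viewpoint, max_depth, detail=2.0, depth=0):
    """Return the (centre, size, depth) voxels to render for this viewpoint."""
    if depth == max_depth or math.dist(centre, viewpoint) > size * detail:
        return [(centre, size, depth)]  # far enough away: render at this level
    quarter = size / 4.0
    selected = []
    for dx in (-quarter, quarter):
        for dy in (-quarter, quarter):
            for dz in (-quarter, quarter):
                child = (centre[0] + dx, centre[1] + dy, centre[2] + dz)
                selected += select_voxels(child, size / 2.0, viewpoint,
                                          max_depth, detail, depth + 1)
    return selected
```

Because every split replaces a voxel with exactly eight children, the selected voxels always tile the root volume with no gaps or overlaps.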

Other optimizations such as culling voxel bounding boxes against the view frustum help reduce the amount of geometry rendered and an asynchronous geometry generation system is employed so rendering is not slowed down while the CPU generates geometry from the density field – voxel geometry simply appears as it’s generated in a closest-first type fashion and cached in memory for future frames. There is plenty of scope for more advanced visibility culling techniques such as hierarchical Z buffer tests but this will do for now.

While initial experiments are based around basically Earth like planets, the real power of this approach is that it can represent any form of geometry at all, so half-destroyed blown apart planet husks are entirely possible along with sprawling cave systems, vertical cliff faces and even gravity defying floating rock precipices – there should really be no limit.

Anyway, that covers the basics and is probably enough for now – take a look at the screenshots to see how it’s coming along but bear in mind please that it is still very early days so apart from the major feature omissions such as atmospheric scattering, vegetation, water and infrastructure there are also many major glitches with the code that still need ironing out.

– John

Introduction to Geo

‘Geo’ is a project that I started after growing a little frustrated with my previous project ‘Isis’. Isis was an attempt to create a virtual island and explored such techniques as clip-maps and geometry shaders. Over time though I realised that a single island was too limiting and I would rather have a complete planet to create procedurally – and if you are doing one planet you might as well do more, so Geo is an attempt to produce a simulation where you can go from moving between planets in space right down to walking around at ground level.

I’m still focussing on offline procedural generation as I want to create environments at a fidelity and diversity that simply cannot be generated on the fly at runtime, but with the amount of data needed for potentially thousands of planets a caching and background generation system needs to be built in as a core part of the system’s architecture. The first version uses worker threads on the same machine (via a task pool system) but I’m attempting to design it in such a way that a distributed work system can be utilised to provide far more computing power for the procedural environment generation.

The other major difference between Isis and Geo is that I am not using clip-maps this time for the terrain as I want to experiment with fully 3D terrain meshes rather than being limited to a traditional (and simplistic) height field. My planets are thus constructed using a terrain density function evaluated using the marching cubes algorithm, with individual sections of geometry stored and rendered using an octree, allowing any possible shape to form such as vertical cliffs, overhangs and cave systems. I have a vision in my mind of a blasted half-planet, its entrails flowing into space as the result of some cataclysmic impact – it would be nice to get there if I can.

One of the major problems to overcome when trying to simulate such a large environment is of course the issue of numerical precision. Single precision floating point numbers as are typically used for 3D graphics can only store about six to seven significant decimal digits and so quickly run out of precision when you are talking about representing features all the way from the centimetre scale right up to astronomical units and beyond. To combat this, Geo uses a number of different co-ordinate spaces each with its own origin and scale – planetary bodies are generally represented at the kilometre scale with the centre of each cell in the octree structure providing a convenient origin for its content. This way as we progress down the levels of detail the range of values naturally diminishes and accuracy increases. Flying between planets in a solar system however may require representations at the astronomical unit scale with the origin at the centre of the solar system – managing the smooth transition between these different co-ordinate spaces is paramount to eliminate popping/snapping artifacts and ensure maximum accuracy in the geometry rendering.
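
To put a number on the precision problem, here is a small sketch that rounds a position to single precision, once measured from a far-away origin and once from a nearby one. The helper names are made up for the example and everything is kept in metres for simplicity:

```python
import struct


def to_float32(value):
    """Round a Python float (a double) to the nearest single precision value."""
    return struct.unpack("f", struct.pack("f", value))[0]


def float32_error(position_m, origin_m=0.0):
    """Absolute error in metres when a position is stored as a float32
    offset from the given origin."""
    local = position_m - origin_m
    return abs(to_float32(local) - local)


AU_M = 149_597_870_700.0   # one astronomical unit in metres
feature = AU_M + 0.01      # a point 1 cm beyond 1 AU from the system origin

# Stored relative to the centre of the solar system: off by kilometres.
error_global = float32_error(feature)

# Stored relative to a nearby origin (e.g. a cell centre): sub-micron error.
error_local = float32_error(feature, origin_m=AU_M)
```

At one astronomical unit the gap between adjacent float32 values is 16,384 metres, so centimetre detail is hopeless from a single global origin but survives comfortably once the origin is moved close by.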

There are many other problems both large and small presented by attempting a simulation of this scale but the whole point of the exercise is to experiment, and without the pressures of work the project can drift quite happily at its own speed and the focus shift with my mood. With that in mind progress so far has been fairly slow as I scrabble to find the odd half-hour here and there to work on it, but what is there so far I think is quite promising. I’ve added some early screenshots to the Gallery section to record its state so far – the lack of atmospheric scattering, dynamic shadows and surface detail makes them a little on the bland side but I want to record progress and it has to start somewhere.

Anyway, that’s probably enough for now – have a look at the screenshots and subscribe to the Geo Blog RSS feed if you are interested and want to be notified of future updates as they get posted. Use the forums to leave feedback too if you like – it’s always good to hear from the community.

– John

It’s all in the detail…

Firstly thanks for the positive comments on my last post – as any journaler knows it really does make a huge difference to morale to know people like what you do enough to bother posting some feedback. After the initial work on flora outlined last time, I decided to take a bit of a break from that and look at the landscape texturing. This was done using a 524288 x 524288 (512K^2) clipmap based texture but even at that resolution my 40 Km square island was looking a bit Nintendo-64 for my liking…the ground texture was blurry and lacking detail as can be seen in these screenshots:

(Note that I’ve turned off the grass effect here for clarity)

Not only this, but it took a long time to generate texture pages which made moving around and changing things a bit painful. The original desire here had been that one big unique texture would allow maximum freedom as you can put anything anywhere without fear of tiling artifacts or running out of memory – the memory footprint is constant. Unfortunately though I came to think that this benefit wasn’t actually worth the sacrifice in resolution and data generation time so I’ve been looking at replacing it with a more traditional tile based system. The art of course is to somehow do this without the nasty carpet-like tiling so often seen. I am still using a 128K x 128K clipmap based texture for the height and normal data but to this I have added blending weights for up to sixteen landscape tile textures which will represent the various grass, dirt, sand, rock, snow effects or whatever. Hopefully sixteen will be sufficient as even with this it’s taking 12 bytes per texel.

During rendering, the landscape vertex shader unpacks these weights from the clipmap texture and passes them on so the GPU will interpolate them at the per-pixel level. The pixel shader can then use them to read from an array of landscape tile textures for all non-zero weights, blending the read texture colours together for the final result. All these are blended onto a base texture (currently grass) so in effect there are seventeen actual textures in use.
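
The per-pixel blend can be sketched on the CPU like this. The exact blend formula isn't spelled out above, so this assumes a plain weighted sum where whatever weight is left over goes to the base texture; `blend_texel` and its arguments are hypothetical names:

```python
def blend_texel(base, tiles, weights):
    """Blend tile colours onto a base colour using per-texel weights.

    base    -- (r, g, b) colour read from the base texture
    tiles   -- list of (r, g, b) colours, one per landscape tile texture
    weights -- list of blend weights in [0, 1], one per tile
    Assumption: weights summing to more than 1 are renormalised, and any
    weight left over is given to the base texture.
    """
    total = sum(weights)
    if total > 1.0:
        weights = [w / total for w in weights]
        total = 1.0
    out = [channel * (1.0 - total) for channel in base]
    for colour, weight in zip(tiles, weights):
        if weight == 0.0:
            continue  # mirrors the shader skipping reads for zero weights
        for i in range(3):
            out[i] += colour[i] * weight
    return tuple(out)


grass = (0.2, 0.6, 0.2)
rock, sand = (0.5, 0.5, 0.5), (0.9, 0.8, 0.6)
mostly_rock = blend_texel(grass, [rock, sand], [0.75, 0.0])
```

Skipping the zero weights matters more on the GPU than it does here, as each skipped tile is a texture read that never happens.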

Below are some screenshots of the new system, both performance wise and memory wise it’s quite similar to the old one but it should be pretty obvious even to the casual observer that there is significantly more detail present – I’m working with a scale of 1 cm per texel for now:

The first version of this I had running used (512 x 512) landscape texture tiles which I had ‘borrowed’ from a released game, but even with my most careful fBm based blending between textures I found the tiling artifacts caused by the repeating texture detail most unsatisfactory. To improve this situation I employed the texture synthesis class I wrote to help produce unique texture detail for the old massive colour texture system I was replacing. Using this I produced new (2048 x 2048) tile textures using the original (512 x 512) ones as exemplars. Although this uses more memory (we’re talking about 40 MB for the texture array even with BC1 compression), for the main landscape rendering I am happy with this budget, especially as it’s not that different from the space used by the old clipmap system. The key benefits though are that it not only reduces the frequency of the tiling artifacts by a factor of four in each axis, it also reduces the visibility of the artifacts as the synthesised texture is by its nature more chaotic.
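
The memory figure is easy to sanity check. BC1 stores each 4x4 block of texels in 8 bytes (half a byte per texel), so a quick sketch gives the size with and without a full mip chain. Whether the array really carries mips, and whether the base texture lives in the same array, are assumptions here:

```python
def bc1_bytes(width, height, mipmaps=True):
    """Size in bytes of a BC1 compressed texture (8 bytes per 4x4 block)."""
    total = 0
    while True:
        blocks_x = max(1, (width + 3) // 4)
        blocks_y = max(1, (height + 3) // 4)
        total += blocks_x * blocks_y * 8
        if not mipmaps or (width == 1 and height == 1):
            return total
        width, height = max(1, width // 2), max(1, height // 2)


TILE_COUNT = 16  # the sixteen blendable landscape tiles

array_no_mips = TILE_COUNT * bc1_bytes(2048, 2048, mipmaps=False)
array_with_mips = TILE_COUNT * bc1_bytes(2048, 2048, mipmaps=True)
```

That works out at 32 MiB for the sixteen tiles without mips and roughly 43 MiB with them, which brackets the "about 40 MB" figure.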

As an example, below is one of the rock textures that I’m working with. On the left is the (512 x 512) ‘borrowed’ original, in the middle is a (2048 x 2048) version produced by simply tiling the original while on the right is a (2048 x 2048) version produced by the texture synthesiser:

As you can see, the middle tiled image shows a strong repetitive pattern which is very obvious when used in situ. By comparison, the synthesised image on the right, while not exactly the same as the exemplar, exhibits pretty much the same features but in a completely chaotic manner, eliminating the tiling artifacts. Another bonus is that the synthesised texture maintains the tiling property of the original so can be tiled as needed across the landscape.

Synthesis is carried out as an offline process during program startup with the results being cached on disk for subsequent runs. A lengthy pre-processing pass is carried out on the exemplar (it currently takes about an hour for a 512×512 exemplar on a single core HT machine) which generates an exemplar reference file. This can then be used with various randomness parameters to generate different larger synthesised textures in about five minutes each. The algorithm was originally adapted from a SIGGRAPH paper where it ran on a GPU so it could be sped up significantly, but I find it easier to understand and debug new algorithms on the CPU first.

As the blend weights for the different textures are stored with the height field samples, they can be changed every 30cm or so which I think will turn out to be adequately fine granularity for good looking landscapes, and the fact that all sixteen tiles can be blended with various weights should mean no hard edges where path meets grass for example.
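
The "30cm or so" figure can be checked with a little arithmetic, assuming the 128K x 128K clipmap spans exactly the 40 Km island (that mapping is my reading of the numbers rather than something stated outright). The same sums show how often a tile repeats at 1 cm per texel:

```python
ISLAND_SIZE_M = 40_000           # 40 Km square island
HEIGHT_SAMPLES = 128 * 1024      # samples per axis in the height/weight clipmap
TEXEL_SIZE_M = 0.01              # 1 cm per texel for the tile textures

# Spacing between height samples, and therefore between blend weight changes.
sample_spacing_m = ISLAND_SIZE_M / HEIGHT_SAMPLES

# Distance covered before a tile texture repeats, old and new tile sizes.
repeat_512_m = 512 * TEXEL_SIZE_M
repeat_2048_m = 2048 * TEXEL_SIZE_M
```

So the weights can change roughly every 30.5 cm, and the synthesised tiles repeat every 20.48 m rather than every 5.12 m.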

The other less major change in this version is a new grass texture. The one shown here is from an old nVidia sample which I think is better than the crobby one I had knocked up myself – I’m still not 100% happy with it but at least it’s an improvement.

Anyway, I’m quite happy with the end result. The island now has much finer ground texture detail for about the same memory and processing footprint and while it’s lost slightly in flexibility it’s gained massively in visual appeal so I count that as a win.

Blooming Flora

(Originally posted Saturday, July 12, 2008)

It’s been a couple of weeks but in the odd hour I’ve managed to steal here and there I’ve been working on the Island’s flora rendering, namely trees and some grass. I’ve been working on a few systems, but the main ones are the leaves for the trees and some grass for the ground. The leaves are working pretty well now but the grass, while good from some viewpoints, isn’t as good as I would like. Still, it’s better than nothing.

I’ve uploaded a whole bunch of new Screenshots and a couple of New Videos showing off the various improvements I’ve made recently, click on one to have a look to see what I’m talking about.

There are currently three different types of tree (Black Tupelo, Weeping Willow and CA Black Oak) but as they are defined using just a handful of parameters in an XML file I hope to add some more soon. Ultimately I want to do an interactive editor tool for tree creation to make the whole process easier and more fun. LODs are supported on the trees’ branches/trunks with most having between five and eight LODs depending on the tree definition, while the leaves use a continuous LOD scheme so close up you get all the leaves rendering while at 800m or so distance you get a minimum of only 20% of the leaves. Individual leaves blend in quite smoothly as you approach the tree making the transitions fairly seamless. Both the trunk and the leaves sway in the wind using a noise texture and hash function in the shaders.

The grass is made up of about 8000 instanced blades created in the geometry shader and uses the height and normals from the clipmap to automatically follow the contours and light appropriately. The blades also blend in over distance and sway in the wind, producing a reasonable effect. I think it would benefit massively from better grass textures than my art skills allow so I’ll need to go on the hunt for some I can steal I think.

In line with the whole point of this project, I’ve been trying out some techniques I haven’t played around with before. First is instancing which could be done in DX9 but has received a bit of an overhaul in DX10 making it even more useful. Key to the DX10 improvements is that you can now access the index of the primitive and instance from within your shaders using system semantics, enabling some funky effects to be done entirely in shader code.

One example of this is the LOD system for the leaves on the trees – by passing in a number of leaves to render to the geometry shader it can use the index of each leaf’s primitive to set the alpha so leaves appear to fade in as they are added to the drawn list rather than popping.
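
Here is a CPU-side sketch of that idea. The 800m distance and the 20% floor come from the description above, but the linear falloff, the 50m full-detail distance and the function names are assumptions for illustration only:

```python
def leaf_fraction(distance_m, full_detail_m=50.0, min_detail_m=800.0,
                  min_fraction=0.2):
    """Fraction of a tree's leaves to draw at a given distance.

    Assumed linear falloff from all leaves at full_detail_m down to
    min_fraction of them at min_detail_m and beyond.
    """
    if distance_m <= full_detail_m:
        return 1.0
    if distance_m >= min_detail_m:
        return min_fraction
    t = (distance_m - full_detail_m) / (min_detail_m - full_detail_m)
    return 1.0 + t * (min_fraction - 1.0)


def leaf_alpha(leaf_index, leaves_to_draw):
    """Alpha for one leaf given a fractional number of leaves to draw.

    Leaves with an index below the whole part are opaque, and the leaf
    currently being added fades in with the fractional part, so nothing pops.
    """
    return min(1.0, max(0.0, leaves_to_draw - leaf_index))


TOTAL_LEAVES = 2000  # hypothetical leaf count for one tree
leaves_to_draw = TOTAL_LEAVES * leaf_fraction(425.0)
```

In the shader version `leaf_index` would be the primitive index supplied by the system semantic and `leaves_to_draw` a constant passed in per tree.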

It’s very cool that I can draw *all* the tree branches and trunks with just *one* draw call, all the leaves with another and all the grass blades with just one other – all that visual prettiness with just three draw calls. Smart.

Instancing is of course great for performance, offloading almost all the work from the CPU and really letting the GPU do its thing. I’m constantly amazed at just how fast these DX10 cards are (I’m using two different flavours of GeForce 8800 GTS, one 320MB and one 512MB) when given data like this. It was only when I added some metrics code that I realised I was throwing over two million tris at the thing and it just lapped it up. Amazing.

Another new feature for me is the use of alpha-as-coverage (more usually called alpha-to-coverage), which is a technique where the alpha value of fragments is used as the coverage information for multi-sample anti-aliasing rather than for actual alpha blending itself. This gives you a sort of dithered alpha style effect which is inferior to actual blending but has the massive benefit of being draw order independent, so I can render my grass and my leaves in any order I like and still get fuzzy edges and fades.
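
Conceptually the conversion looks something like the sketch below. Real hardware chooses which samples to cover in an implementation-specific (often dithered) way, so the simple "fill the low bits" rule and the function names here are just assumptions to show the principle:

```python
def coverage_mask(alpha, samples=4):
    """Turn an alpha value into a multi-sample coverage bit mask.

    The number of covered samples is alpha * samples rounded to nearest;
    this sketch always covers the lowest numbered samples.
    """
    alpha = max(0.0, min(1.0, alpha))
    covered = int(alpha * samples + 0.5)
    return (1 << covered) - 1


def resolved_opacity(alpha, samples=4):
    """Effective opacity after the multi-sample resolve averages the samples."""
    return bin(coverage_mask(alpha, samples)).count("1") / samples
```

With 4x MSAA there are only five possible opacity levels (0, 25, 50, 75 and 100%), which is where the dithered look comes from. Each sample is still resolved by the normal depth test, which is why draw order stops mattering.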

The final new addition to the Island is a fairly simple Bloom effect to add a bit more atmosphere. This is an old-school low dynamic range effect that takes the brightest areas of the screen, blurs them out and then adds them back on top to make those areas glow. Originally I thought it would give nice sun glows through the trees and while it does add a little glow in this case, it’s far more noticeable on the more distant horizon where it produces a nice almost dream-sequence glowy haziness which reminds me a bit of the Oblivion landscapes. Not sure it’s the effect I’m after but it’s quite nice none the less.
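
The three steps (bright pass, blur, add back) can be sketched on a tiny greyscale image. The threshold, the box blur and the function names are assumptions for the example; the real effect runs on the GPU and quite possibly uses a different blur kernel:

```python
def bright_pass(image, threshold=0.8):
    """Keep only the part of each pixel that is brighter than the threshold."""
    return [[max(0.0, p - threshold) for p in row] for row in image]


def box_blur(image, radius=1):
    """Average each pixel with its neighbours, clamping at the image edges."""
    h, w = len(image), len(image[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for yy in range(max(0, y - radius), min(h, y + radius + 1)):
                for xx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += image[yy][xx]
                    count += 1
            out[y][x] = total / count
    return out


def bloom(image, threshold=0.8, radius=1, intensity=1.0):
    """Add a blurred copy of the bright areas back on top of the image."""
    glow = box_blur(bright_pass(image, threshold), radius)
    return [[min(1.0, p + intensity * g) for p, g in zip(row, glow_row)]
            for row, glow_row in zip(image, glow)]


# A dull 3x3 image with one very bright pixel in the middle.
scene = [[0.2, 0.2, 0.2],
         [0.2, 1.0, 0.2],
         [0.2, 0.2, 0.2]]
glowing = bloom(scene)
```

The bright centre pixel leaks a little light into its neighbours while an image with nothing above the threshold passes through untouched.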

Anyway, enough talk, check out the screenshots and videos. If you can spare a minute to let me know what you think that would be great too – comments as always are most welcome.

Somewhere for island squirrels to live

Taking a break from the wet stuff, I’ve decided to spend some time looking at adding some trees and other flora to the island. After a bit of Google research, I found what appears to be one of the definitive papers on this subject, “Creation and Rendering of Realistic Trees” by Jason Weber and Joseph Penn. I frequently struggle with such research papers as so often the authors give you just enough information to show how clever they are but not enough to duplicate or extend their work without having to pretty much do all the research again; so it was with a little trepidation that I approached this one, but fortunately Weber and Penn appear to have accomplished that rare feat of producing a paper that is clear and fairly easy to understand. I applaud them.

Having a procedural bark or leaf texture is a bit of a stretch at the moment so I have ‘borrowed’ some from the excellent SpeedTree package – hopefully they won’t mind as this isn’t a commercial project and eventually I hope to replace them with something that is actually procedurally generated or at least synthesised.

Anyway, I’ve uploaded some new screenshots to show the initial results of my efforts – three tree examples showing a CA Black Oak, Black Tupelo and Weeping Willow. The parameters are taken roughly from the Weber/Penn paper, modified slightly as I am trying to adjust the algorithm as I go to make it more suitable for runtime use. I want to port some more tree types to give more variation and possibly even experiment with pseudo-random trees to see what comes out. No need for the island to be too realistic now is there?

One thing that is immediately obvious is there are no leaves at the moment – now either I limit my island to being in the depths of winter the whole time or I need to address this. As you might expect, leaves are next on the list of things to look at. The plan for these is to use sprites rather than the geometric leaf implementation described in the paper simply because with the correct texture it should produce reasonable results with much simpler geometry. It also gives me a reason to delve into geometry shaders – an aspect of DirectX 10 that I haven’t yet looked at. The plan is to have a vertex buffer with positions, tints and direction vectors that is turned into quads by the geometry shader for rendering…doesn’t sound too hard but as I’ve never written a geometry shader before it will be a learning experience. I’ll put up some more images once I get it working.
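
Before writing the shader, the point-to-quad expansion can be prototyped on the CPU. This sketch assumes the direction vector is the way the leaf faces (its normal) and that a world up vector is used to orient the quad; both are guesses at a design that hasn't been written yet, and all the names are hypothetical:

```python
import math


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalise(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


def expand_to_quad(position, direction, half_size, up=(0.0, 1.0, 0.0)):
    """Expand one point into the four corners of a quad facing 'direction'.

    Corners are returned in triangle strip order, much as a geometry
    shader would emit them.
    """
    normal = normalise(direction)
    side = cross(up, normal)
    if sum(c * c for c in side) < 1e-12:
        # direction is parallel to up, so pick another reference axis
        side = cross((1.0, 0.0, 0.0), normal)
    side = normalise(side)
    top = cross(normal, side)
    corners = []
    for s, t in ((-1, -1), (1, -1), (-1, 1), (1, 1)):
        corners.append(tuple(p + half_size * (s * a + t * b)
                             for p, a, b in zip(position, side, top)))
    return corners


quad = expand_to_quad((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), 1.0)
```

The tint would simply be copied to all four emitted vertices.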

Other bits and bobs that will need doing include some form of dynamic level of detail so the 30,000 triangle tree can be rendered with just a couple of hundred tris/lines at distance and ultimately just as a sprite – I want to have some fairly dense forests so effective LOD is essential. Some wind sway in the branches and leaves would also be good; some sort of noise or turbulence in the vertex shader will hopefully take care of this and should look great once the dynamic shadows go in. Finally some degree of pseudo self-shadowing with a dappling texture might be worth playing with.

Anyway, that’s enough for now, I’m going to go read up on geometry shaders.

Shore-ly it’s not *that* hard?

(Originally Posted Tuesday, May 27, 2008)

All I’m going to talk about today is one little aspect of one part of the world but it’s one of those little features that you think you can just drop in but three weeks later realise there’s just a bit more to it than you thought.

I had the deep water effect in and working but due to the way it works all the waves move in the same general direction which I thought was a bit poor – wouldn’t it be great if there were waves that washed up against the shore a bit more like they do in real life? I had a brief play with distorting the primary waves to achieve this but the stretching and warping effects were truly horrible so I soon ditched that idea. Instead, I came up with storing a second channel on the water depth field texture that stores the distance from each point in the field to the closest shoreline. This distance could then be used in conjunction with an animated constant to produce waves in the water vertex and pixel shader that moved towards the shore regardless of the shore’s orientation.
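
The shader side boils down to something like this sketch, where the wave phase depends on the stored distance plus an animated time term. The sine shape, the parameter values and the function name are illustrative assumptions rather than the actual shader:

```python
import math


def shore_wave_height(distance_to_shore, time_s, wavelength=8.0, speed=2.0,
                      amplitude=0.25, max_wave_distance=32.0):
    """Height of the shore wave at a point a given distance from the shore.

    Adding speed * time to the distance makes each crest sit at a smaller
    distance as time goes on, so waves travel towards the shore whatever
    direction the shore happens to face.
    """
    if distance_to_shore >= max_wave_distance:
        return 0.0  # too far out: only the deep water effect applies
    phase = 2.0 * math.pi * (distance_to_shore + speed * time_s) / wavelength
    return amplitude * math.sin(phase)
```

For example, a crest that sits 2 m from the shore at time zero is found 1 m from the shore half a second later.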

Turns out that modifying the shaders to produce the waves was the easy part; the generation of the required “distance to shore” values on the other hand turned out to be a complete pain in the proverbial. It’s easy enough to search from a water point to find the closest non-water point representing the shore, and this does indeed work fine for large bodies of water. If you simply do this on its own though, then in places where the water area isn’t wide enough for the full wave width (such as in channels and smaller bays and coves) the waves spawn at less than maximum wave distance, very noticeably popping into existence at the centre of the water before travelling towards the shore, which looks really really stupid. Herein lies the rub.
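
The naive version of the search is easy to sketch. This one uses a breadth-first flood outwards from every land cell, which gives a grid-step distance rather than a true Euclidean one; it's a swapped-in simplification to show the problem, not the search the project uses:

```python
from collections import deque


def distance_to_shore(water):
    """Grid distance from every cell to the nearest land cell.

    water -- 2D list of booleans, True where there is water.
    Land cells get 0. Cells stay None if the map has no land at all.
    """
    h, w = len(water), len(water[0])
    dist = [[None] * w for _ in range(h)]
    queue = deque()
    for y in range(h):
        for x in range(w):
            if not water[y][x]:
                dist[y][x] = 0
                queue.append((x, y))
    while queue:
        x, y = queue.popleft()
        for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if 0 <= nx < w and 0 <= ny < h and dist[ny][nx] is None:
                dist[ny][nx] = dist[y][x] + 1
                queue.append((nx, ny))
    return dist


# A narrow channel: land, three cells of water, land.
channel = distance_to_shore([[False, True, True, True, False]])
```

The channel comes out as 0, 1, 2, 1, 0, so its centre only ever reaches a distance of 2. If the maximum wave distance is larger than that, waves have nowhere to start from except part way through their cycle, which is exactly the popping described above.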

I’ve spent my spare time over the last couple of weeks trying various methods to see how I could normalise the distance-to-shore values so even in narrow stretches of water the centre of the water was stored at maximum wave distance and points between there and the shore were scaled to fit so waves always spawned properly. Most of these methods involve creating a binary bitmap of the water area and applying various morphological image processing techniques such as erosion and shrinking to try to end up with a ‘skeleton’ of points in the centre of each area of water. These points are then all stored at maximum wave distance and the points between them and the shore stored at scaled distances.

The theory sounds simple but in practice it’s not. Neither the conventional erosion algorithm nor the binary shrinking algorithm produced quite the type of shape skeleton I was after, and in the end it was a hybrid style approach that I found gave the best result. It’s not perfect but it’s pretty close to what I want and when in use there are only a couple of problem areas where the coastline is particularly “crinkly”. Anyway, it’s certainly good enough for now so I’m going to move on to other things.

I’ve captured a short video to show the effect in action – it’s a bit subtle and took much longer than I had hoped but I think it’s worthwhile, and shore adaptive waves are not something I’ve seen in many water demos. The video also shows the dynamic time-of-day effect…I’ll probably post more details on how that works in the future if it’s of interest.